A brief introduction to the class: S520 is an introductory course in statistics for graduate students, taught by Professor Michael Trosset. It aims for depth in each subject we cover, explaining the whys and wherefores of each topic instead of trying to cram in as many topics as possible (which is a major perk, in my opinion). It’s actually taught in conjunction with S320, the undergraduate version of the same course, so I think most of the other students in the course are undergrads (not a bad thing, just an observation). You can read more about it on the pages linked above. OK, on with the notes.

Chapter 3, Probability

Section 3.2

- The basics of modern probability were devised by Kolmogorov, as discussed last time.

- Kolmogorov’s probability space has three major components:

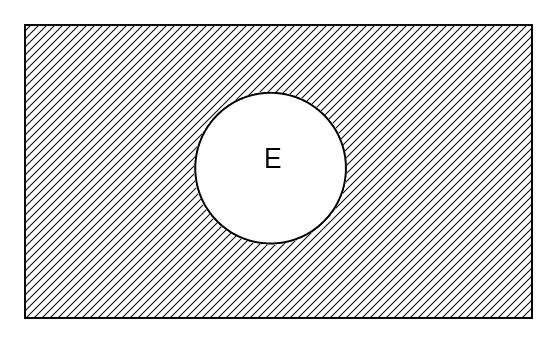

  - $S$, the sample space: the universe of possible experimental outcomes
  - $\mathcal{C}$, the collection of subsets of $S$ called “events”; events have well-defined probabilities
  - $P$, the probability measure: a function that assigns real numbers (called “probabilities”) to events. In proper notation, that looks like $P: \mathcal{C} \to \mathbb{R}$.

- There are several criteria the probability measure has to satisfy, though many of them are by convention only.

- Convention – we require $0 \le P(A) \le 1$, where $P(A)$ is the probability of any given event $A$.

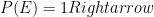

- If $P(A) = 0$, then $A$ is not going to happen, generally speaking. (Apparently there are exceptions I don’t know about.)

- If $P(A) = 1$, then $A$ is definitely going to happen.

- Convention – the sample space $S$ has to be an event in $\mathcal{C}$.

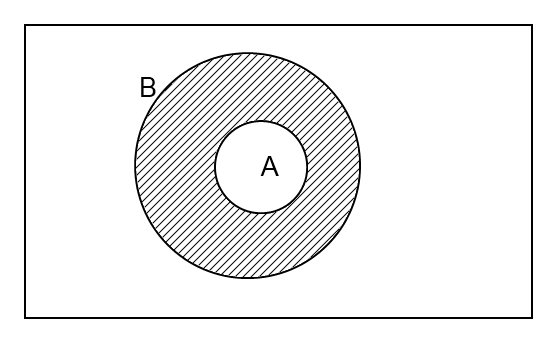

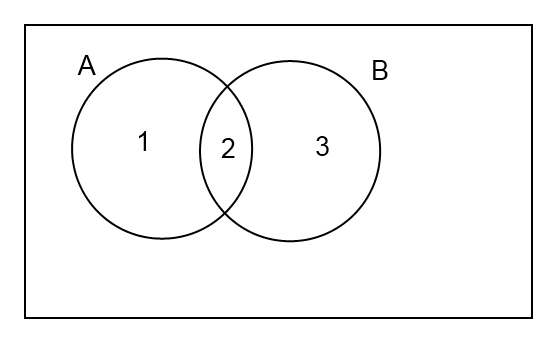

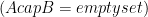

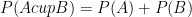

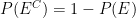

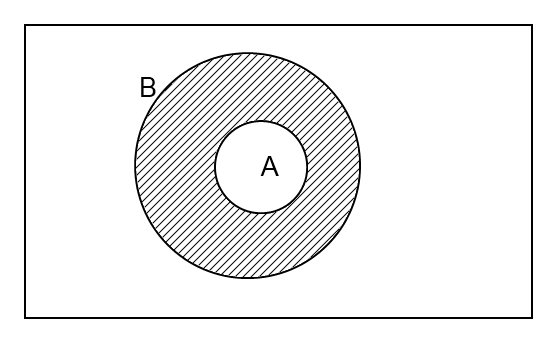

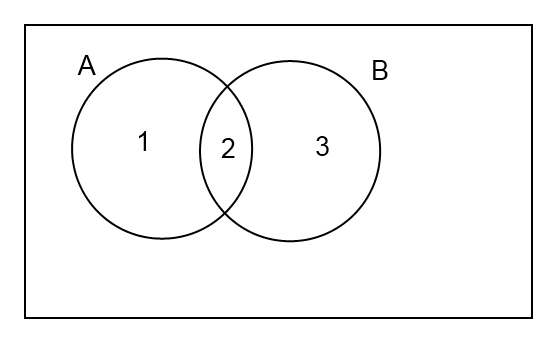

- Crucial property: If $A$ and $B$ are disjoint events, then the probability of their union is $P(A \cup B) = P(A) + P(B)$.

- Because of those conventions, we can learn these things:

are disjoint, then by “crucial property”:

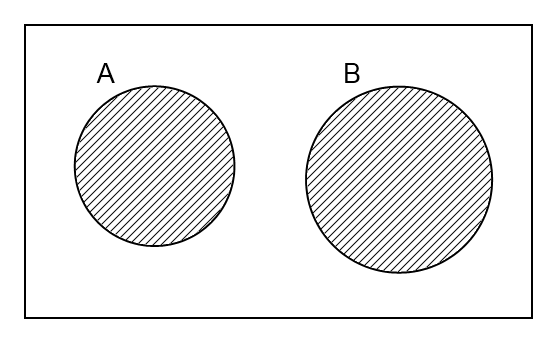

- If

, then

-

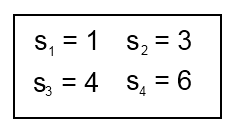

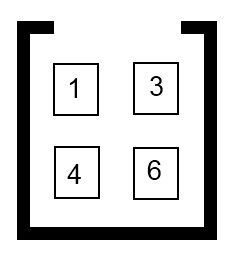

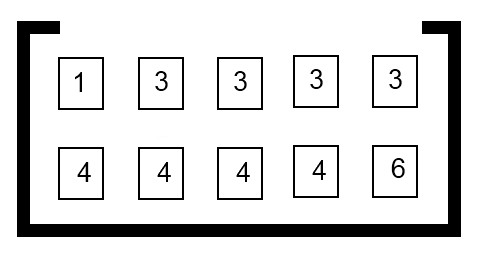
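To make the triple $(S, \mathcal{C}, P)$ concrete, here is a minimal Python sketch. It assumes a fair six-sided die as the experiment (an illustrative assumption), takes $\mathcal{C}$ to be every subset of $S$ (the power set, which works for a finite sample space), and defines $P$ by counting faces. The names `S`, `C`, `P`, and `power_set` are just for this example. It then checks the conventions and the crucial property by brute force over all 64 events.

```python
from fractions import Fraction
from itertools import chain, combinations

# Sample space S: the six faces of a die (an illustrative assumption).
S = frozenset(range(1, 7))

def power_set(s):
    """Every subset of s, as frozensets; here this plays the role of C."""
    items = sorted(s)
    return [frozenset(c) for c in chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1))]

C = power_set(S)  # 2**6 = 64 events

def P(event):
    """Probability measure, assuming each face is equally likely."""
    if event not in C:
        raise ValueError("not an event in C")
    return Fraction(len(event), len(S))

# Convention: 0 <= P(A) <= 1 for every event A.
assert all(0 <= P(A) <= 1 for A in C)
# Convention: S itself is an event, and it is certain.
assert S in C and P(S) == 1
# The empty event has probability 0.
assert P(frozenset()) == 0

# Crucial property: for disjoint A and B, P(A u B) = P(A) + P(B).
for A in C:
    for B in C:
        if A.isdisjoint(B):
            assert P(A | B) == P(A) + P(B)

print(len(C), P(frozenset({2, 4, 6})))  # prints: 64 1/2
```

Exact `Fraction` arithmetic is used instead of floats so the equality checks are not thrown off by rounding.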

Section 3.3 Finite Sample Space

- The sample space $S$ has a finite number $N$ of outcomes, represented by $S = \{s_1, s_2, \ldots, s_N\}$.
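With a finite sample space, applying the crucial property repeatedly means $P$ is pinned down by the probabilities of the individual outcomes: $P(A) = \sum_{s_i \in A} P(\{s_i\})$. A minimal Python sketch, using a hypothetical loaded die whose weights are made up purely for illustration (the dict `p` and function `P` are illustrative names):

```python
from fractions import Fraction

# Hypothetical outcome probabilities for S = {s_1, ..., s_6}: a loaded die.
# Each p_i must be nonnegative, and together they must sum to 1.
p = {1: Fraction(1, 10), 2: Fraction(1, 10), 3: Fraction(1, 10),
     4: Fraction(1, 10), 5: Fraction(1, 10), 6: Fraction(1, 2)}
assert all(pi >= 0 for pi in p.values()) and sum(p.values()) == 1

def P(event):
    """P(A) as the sum of P({s_i}) over the outcomes s_i in A."""
    return sum((p[s] for s in event), Fraction(0))

print(P({2, 4, 6}))  # 1/10 + 1/10 + 1/2, prints 7/10
```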

Friday – Conditional probabilities

LaTeX is actually getting kind of fun, now that I’m figuring it out. I’ve even figured out how to draw simple diagrams and the like from within LaTeX, so I don’t have to keep switching back and forth between programs, which is kind of nice. Anyway, here you go.

, both for the challenge and for the practice. Therefore, today’s entry is a PDF file, not a real blog post. (Sorry.) Here are the notes. Let me know if you find any major errors.

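- Suppose each of the $N$ outcomes is “equally likely” (a recurring phrase we’ll hear a lot). In this case, each outcome has probability $P(\{s_i\}) = 1/N$ for $i = 1, \ldots, N$.

- If an event $A$ contains $k$ of the outcomes in $S$, then what is $P(A)$?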
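When all $N$ outcomes are equally likely, adding up $k$ copies of $1/N$ with the crucial property gives $P(A) = k/N$, so computing a probability reduces to counting. A small Python sketch, assuming two fair dice as the example (36 equally likely ordered pairs); here an event is passed as a yes/no test on each outcome rather than as an explicit set, which is just a convenience for this illustration:

```python
from fractions import Fraction
from itertools import product

# Sample space: ordered pairs from two fair dice, N = 36 equally likely outcomes.
S = list(product(range(1, 7), repeat=2))
N = len(S)

def P(event):
    """Equally likely outcomes: P(A) = (number of outcomes in A) / N."""
    k = sum(1 for s in S if event(s))
    return Fraction(k, N)

print(P(lambda s: s[0] + s[1] == 7))  # 6 of the 36 outcomes, prints 1/6
```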